Neural Flow 3202560223 Apex Node

The Neural Flow 3202560223 Apex Node is a modular component for optimizing neural processing in flow-based systems. Its architecture centers on configurability, precise data routing, and synchronization across substrates. By matching data paths to specialized compute primitives, it aims to speed up inference and reduce memory bottlenecks. Context-aware benchmarking and adaptive scheduling are meant to keep performance stable and energy-efficient, but practical deployments involve trade-offs that warrant careful evaluation before broader adoption. The sections below look at how these elements carry over to real-world environments.

What Is the Neural Flow 3202560223 Apex Node?

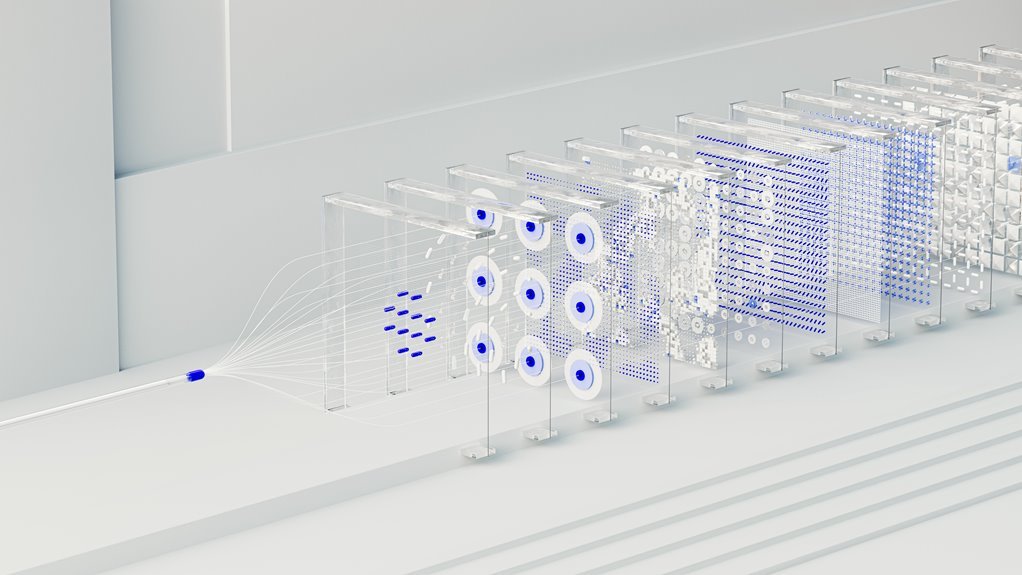

The Neural Flow 3202560223 Apex Node is a modular component that integrates and optimizes neural processing within a broader flow-based architecture. Its design prioritizes configurability, data routing, and synchronization while keeping individual modules adaptable. Performance is assessed with measurable metrics, with the goals of predictable behavior, clear diagnostics, and scalable interoperability across heterogeneous substrates and software stacks.

How the Apex Node Architecture Drives Fast, Energy-Efficient Inference

The Apex Node architecture accelerates inference by matching modular data paths to specialized compute primitives, so that signals propagate quickly between stages and memory bottlenecks are minimized within a unified flow framework.

It relies on contextual benchmarking to tailor performance profiles to each workload, and on memory profiling to locate bottlenecks at microarchitectural granularity.

This combination is intended to yield consistent throughput, lower energy per operation, and adjustable precision, leaving room to explore different design and deployment options.
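The benchmarking and memory profiling described in this section can be illustrated with a small harness. This is a minimal sketch using only the Python standard library, not an Apex Node tool: `benchmark` and `toy_inference` are hypothetical names, and `tracemalloc` reports Python heap allocations only, so it is a coarse stand-in for the microarchitectural profiling the text refers to.

```python
import statistics
import time
import tracemalloc


def benchmark(fn, payload, warmup=3, runs=20):
    """Time fn(payload) over several runs and record peak Python heap use."""
    for _ in range(warmup):  # warm-up calls are not measured
        fn(payload)

    latencies_ms = []
    tracemalloc.start()  # note: tracing adds some overhead to the timings
    for _ in range(runs):
        start = time.perf_counter()
        fn(payload)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
    _, peak_bytes = tracemalloc.get_traced_memory()
    tracemalloc.stop()

    latencies_ms.sort()
    # Nearest-rank style index for the 95th percentile, clamped to the list.
    p95_index = min(len(latencies_ms) - 1, int(0.95 * len(latencies_ms)))
    return {
        "runs": runs,
        "median_ms": statistics.median(latencies_ms),
        "p95_ms": latencies_ms[p95_index],
        "peak_kib": peak_bytes / 1024.0,
    }


def toy_inference(values):
    """Hypothetical stand-in for a model call: a small weighted sum."""
    weights = [0.5] * len(values)
    return sum(v * w for v, w in zip(values, weights))


if __name__ == "__main__":
    print(benchmark(toy_inference, list(range(10_000))))
```

Reporting the median alongside a tail percentile, rather than a single average, makes it easier to see whether throughput is actually consistent across runs.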

Real-World Use Cases: Edge and Cloud Deployments With the Apex Node

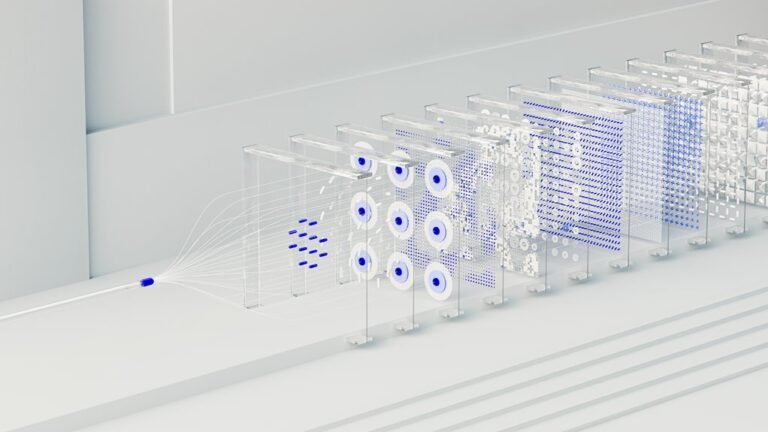

Edge and cloud deployments of the Apex Node show how modular data paths and specialized compute primitives are meant to translate into real-world performance gains while balancing latency, throughput, and energy efficiency across diverse environments.

Edge testing and cloud orchestration are the core workflows. In both, adaptive scheduling and localized inference reduce data movement while preserving accuracy, resilience, and scalable governance.
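One way to picture the scheduling trade-off above is a placement rule that compares estimated end-to-end latency for local and remote execution. The sketch below is an illustration under stated assumptions, not the Apex Node scheduler: the `Target` fields, the cost model (round trip plus transfer plus compute), and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Target:
    name: str
    compute_ms: float      # estimated time to run the model on this target
    bandwidth_mbps: float  # link bandwidth to the target; unused when local
    rtt_ms: float          # network round-trip time; unused when local
    local: bool = False


def estimated_latency_ms(target, payload_mb):
    """Estimate end-to-end latency for sending payload_mb to a target."""
    if target.local:
        return target.compute_ms  # no data leaves the device
    # Megabytes -> megabits, divided by Mbps gives seconds, then milliseconds.
    transfer_ms = payload_mb * 8.0 / target.bandwidth_mbps * 1000.0
    return target.rtt_ms + transfer_ms + target.compute_ms


def choose_target(targets, payload_mb):
    """Pick the target with the lowest estimated latency for this payload."""
    return min(targets, key=lambda t: estimated_latency_ms(t, payload_mb))


if __name__ == "__main__":
    edge = Target("edge", compute_ms=40.0, bandwidth_mbps=0.0, rtt_ms=0.0, local=True)
    cloud = Target("cloud", compute_ms=5.0, bandwidth_mbps=100.0, rtt_ms=20.0)
    for size_mb in (0.1, 1.0):
        print(size_mb, choose_target([edge, cloud], size_mb).name)
```

With these made-up figures, a small payload is cheaper to send to the faster cloud target, while a larger payload is cheaper to process locally because the transfer time dominates. An adaptive scheduler would refresh the estimates from measurements instead of using fixed values.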

How to Evaluate, Deploy, and Optimize Models on the Apex Node

How can practitioners systematically evaluate, deploy, and optimize models on the Apex Node to maximize performance across heterogeneous environments? Evaluation comes first: timing benchmarks measure reliability under varied workloads and inform deployment strategies that balance latency, throughput, and energy use. Model quantization improves efficiency, usually at a small accuracy cost that should be measured rather than assumed, while resource management keeps operation scalable and resilient across devices, networks, and cloud partitions.
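To make the quantization step concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in plain Python. It is a generic textbook scheme chosen for illustration, not a documented Apex Node feature, and the function names are hypothetical. It also measures the round-trip error, which is the kind of check that shows how much precision a given model gives up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map int8 values back to approximate floats."""
    return [q * scale for q in quantized]


def max_abs_error(original, restored):
    """Largest absolute difference between original and restored values."""
    return max(abs(a - b) for a, b in zip(original, restored))


if __name__ == "__main__":
    weights = [0.5, -1.0, 0.25, 0.0, 0.731]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    print("quantized:", q)
    print("scale:", scale)
    print("max abs error:", max_abs_error(weights, restored))
```

Because each value is rounded to the nearest step, the per-weight error is bounded by about half the scale. Whether that is acceptable depends on the model, so accuracy should be re-evaluated on representative data after quantizing.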

Conclusion

The Apex Node sits between what is possible and what can actually be deployed. Its modular design coordinates routing, synchronization, and memory flow to cut latency without sacrificing accuracy. One question remains open: how far can cross-substrate adaptability push margins under real-world volatility? The answer depends on further validated results from deployment.